Tailored Generative AI Development Solutions

Move from stuck pilot to production-ready Gen AI without burning another quarter.

Most Gen AI projects stall after the demo. The model works in isolation, then breaks the moment it meets real data volumes, real users, and real compliance requirements.

We build custom generative AI solutions that survive that handoff. Production-grade LLM systems, AI agents, and RAG pipelines, scoped honestly and shipped on time.

End-to-End Generative AI Development Services

Each service maps to a real problem we have shipped a fix for.

Custom LLM Development

Fine-tuned and instruction-tuned language models for your domain, your data, and your privacy line.

- Domain-specific fine-tuning with LoRA, QLoRA, and PEFT methods

- Instruction tuning on your proprietary support tickets, contracts, or operational data

- Private model hosting for regulated industries that cannot send data to public APIs

- Model evaluation harnesses so you can compare GPT, Claude, Llama, and Mistral on your actual workload

RAG Pipeline Development

Retrieval-augmented generation systems that answer from your documents, not Wikipedia.

- Hybrid retrieval with semantic and keyword search for higher accuracy on niche queries

- Vector database setup across Pinecone, Weaviate, Qdrant, or self-hosted pgvector

- Re-ranking layers and chunk optimization that cut hallucination rates measurably

- Citation and source tracking so every answer is auditable back to the original document
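
To illustrate how semantic and keyword results can be combined into one ranking, here is a minimal sketch using reciprocal rank fusion. This is one common fusion method, chosen as an assumption for the example rather than a description of any specific build. The function name, document IDs, and the `k=60` default are all illustrative.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a commonly used default, not a tuned value.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for one query from the two retrievers.
semantic_hits = ["doc_policy", "doc_faq", "doc_contract"]
keyword_hits = ["doc_contract", "doc_policy", "doc_pricing"]

fused = reciprocal_rank_fusion([semantic_hits, keyword_hits])
print(fused)
```

Documents that both retrievers rank highly rise to the top, while a document found by only one retriever still stays in the list for the re-ranking layer to judge.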

AI Agent Development

Multi-step autonomous agents that plan, call tools, and execute. Built with code execution rather than long tool-call chains, so they actually scale.

- Tool-using agents with MCP (Model Context Protocol) for safe, logged tool access

- Multi-agent systems where a planner agent and an execution agent split responsibilities for cost and reliability

- Workflow agents for customer support, document processing, and back-office automation

- Voice agents with sub-second response times for real-time scenarios

LLM-Powered Chatbots and Co-Pilots

Conversational interfaces that handle context, memory, and edge cases. Not generic FAQ bots.

- Customer support chatbots with escalation logic, ticket categorisation, and CRM integration

- Internal co-pilots for sales, engineering, and operations teams, grounded in your knowledge base

- Multilingual deployment with consistent tone and terminology across markets

- Platform-agnostic delivery across web, Slack, Teams, WhatsApp, and your internal tools

Image and Video Generation

Generative pipelines for product photography, marketing assets, and creative workflows.

- Stable Diffusion and Flux pipelines with ComfyUI for production-grade image generation

- LoRA fine-tuning on your brand assets for consistent visual output

- ControlNet and inpainting for precise edits and product variant generation

- Video and audio generation with TTS, voice cloning, lip-sync, and Whisper-based transcription

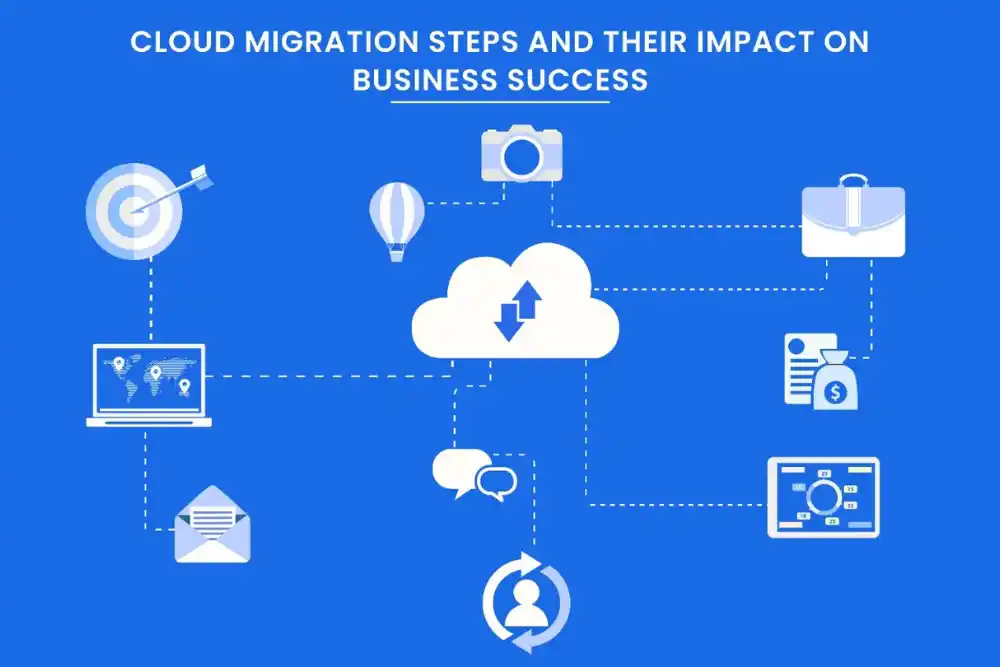

Generative AI Integration and Modernisation

Connecting Gen AI to systems that were not built with it in mind. The unglamorous work that determines whether the model ever reaches a user.

- API and middleware development between LLMs and your existing CRM, ERP, or core platform

- Data pipeline construction with proper governance, lineage, and versioning

- Legacy system Gen AI overlays where the underlying stack cannot be replaced but can be augmented

- Real-time monitoring for output quality, drift, latency, and per-call cost


How TAK Devs Works

Process diagrams look the same at every agency. What matters is what actually happens inside each phase. Here is how we work in practice:

Discovery Call

A focused conversation to understand your goals, challenges, and vision. We ask the right questions to uncover what you truly need before a single line of code is written.

Scoping Workshop

We translate your goals into a clear, actionable plan. Features are prioritised, timelines are set, and everyone aligns on what success looks like, eliminating guesswork from day one.

Sprint Delivery

We build in short, focused cycles, shipping real, working software every sprint. You see progress continuously, give feedback early, and stay in control of where the product is heading.

Launch & Handoff

Your product goes live with confidence. We handle deployment, documentation, and knowledge transfer, ensuring your team is fully equipped to own and operate what we built together.

Ongoing Support

Our relationship doesn't end at launch. We monitor, maintain, and improve your product over time, fixing issues fast and helping you evolve as your users and business grow.

Struggling to keep up with development demands?

See how we can streamline your workflow.

No commitment required | Takes 20 minutes!

Who We Work With

CTOs Watching Pilots Quietly Fail

The AI roadmap was approved 18 months ago. Two pilots ran. Neither made it to production. Nobody at the board level wants to say it out loud.

- Model selection was made by feel, not benchmark, and now you cannot defend the choice

- Compliance review blocked the launch and the team has no audit trail

- The internal team has the skill for one part of the stack but not the LLM ops layer

Founders Burning Runway

You shipped a Gen AI prototype to investors. Now you need it to handle 10,000 users without the costs ballooning past your Series A.

- Vendor disappeared after the deposit and your demo is six months stale

- Token costs are eating the unit economics and nobody can explain why

- The prototype hallucinates on real customer data even though it passed the demo

Ops Leaders Paying for Manual Work

You know three headcount are doing what one well-built Gen AI integration could handle. You also know proving that to procurement is the hard part.

- Manual document review consumes hours per case across the team

- Customer support tickets repeat the same five questions and nothing learns from them

- Internal knowledge lives in PDFs, Slack threads, and one engineer's head

TAK Devs vs. Alternatives

Why dedicated Generative AI experts deliver better results than traditional development teams.

| Capability | TAK Devs | In-House Team | Generic Vendor |
|---|---|---|---|
| AI Model Expertise | LLMs + Custom AI Workflows | Limited internal exposure | General AI implementation |
| Deployment Speed | Rapid MVP to Production | Long hiring cycles | Slow onboarding process |
| Scalable AI Architecture | Production-ready systems | Depends on internal expertise | Basic infrastructure focus |
| Prompt Engineering | Advanced optimisation | Experimental approach | Template-based prompts |
| AI Integration Capability | CRM + ERP + APIs | Requires multiple teams | Limited integrations |
| Security & Governance | Enterprise-grade controls | Varies by company | Often overlooked |
| Optimization & Monitoring | Continuous AI tuning | Manual maintenance | Minimal post-launch support |
| Business Impact Focus | ROI-driven AI solutions | Internal operational focus | Feature-driven delivery |

Industries We Have Built For

We list these not to claim expertise across everything, but to be specific about where we have direct experience. Domain knowledge matters because understanding the compliance constraints of healthcare or the latency requirements of financial systems shapes architecture decisions that general experience misses.

Legal Technology

- Case workflow automation

- Secure document management

- AI legal research

- Compliance tracking systems

Health Tech

- Patient data management

- Telehealth platform integration

- Electronic health records

- Healthcare analytics tools

Automotive & Mobility

- Fleet management systems

- Connected vehicle solutions

- Mobility app development

- Predictive maintenance tools

Retail & E-commerce

- Omnichannel shopping experience

- Inventory management systems

- Conversion rate optimization

- Personalized product recommendations

Consulting Providers

- Data-driven decision making

- Business process automation

- Client collaboration tools

- Performance tracking dashboards

Travel & Hospitality

- Online booking systems

- Guest experience optimization

- Property management software

- Dynamic pricing solutions

Why Teams Pick TAK DEVs

Production First, Not Demo First

We optimise for the system that runs in week 26, not the demo that closes the deal. That means observability, retries, idempotency, and rollback paths from day one.

Honest Scoping

If your idea will not work, we tell you in the discovery call. If it will work but costs more than the value it creates, we tell you that too. We turn down work that should not be built.

Compliance Built In

GDPR, HIPAA, SOC 2, and EU AI Act readiness are part of the design phase, not an afterthought before launch. NDA on day one. Data residency options discussed before code is written.

Cost Discipline

Per-call cost monitoring, prompt-caching strategies, and model selection that matches the task. We have cut token spend by 90 percent on systems that previously used a flagship model for every step.
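
The arithmetic behind matching the model to the task can be sketched as follows. Every price, token count, and step name below is a made-up illustration value, not data from a real engagement, and the actual saving depends entirely on how many steps genuinely need the larger model.

```python
# All prices and token counts below are made-up illustration values.
PRICE_PER_1K_TOKENS = {"flagship": 0.010, "small": 0.0005}

# One user interaction broken into steps: (step name, tokens used, needs flagship?)
STEPS = [
    ("classify_intent", 500, False),
    ("retrieve_and_rank", 1500, False),
    ("draft_answer", 2000, True),
    ("format_output", 1000, False),
]


def interaction_cost(steps, route_by_task):
    """Return the cost in dollars of one interaction.

    With route_by_task=False every step uses the flagship model.
    With route_by_task=True only steps flagged as needing it do.
    """
    total = 0.0
    for _name, tokens, needs_flagship in steps:
        model = "flagship" if (needs_flagship or not route_by_task) else "small"
        total += tokens / 1000 * PRICE_PER_1K_TOKENS[model]
    return total


baseline = interaction_cost(STEPS, route_by_task=False)
routed = interaction_cost(STEPS, route_by_task=True)
saving = 1 - routed / baseline
print(f"baseline ${baseline:.4f}, routed ${routed:.4f}, saving {saving:.0%}")
```

Logging the same per-step breakdown in production is what makes a cost spike explainable: the step that grew is visible, not just the total.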


Frequently Asked Questions

What are custom generative AI development services?

Custom generative AI development services are end-to-end engineering engagements that design, build, and deploy generative AI solutions tailored to a specific business problem rather than off-the-shelf tools.

They typically include use-case definition, data preparation, model selection or fine-tuning, system integration, deployment, and ongoing model monitoring.

How long does it take to build a generative AI solution?

A standard production-ready Gen AI build takes 12 to 16 weeks at TAK DEVs.

- Proof of concept: 3 to 8 weeks

- Production MVP: 10 to 14 weeks

- Full enterprise integration: 4 to 6 months depending on legacy system complexity

How much does custom generative AI development cost?

Cost depends on scope, model choice, and integration depth. As reference points from recent TAK DEVs engagements:

- Proof of concept: fixed-price from a defined scoping workshop

- Production MVP: scoped per use case after discovery, with clear deliverables per sprint

- Per-call runtime cost: well-architected systems run between a few cents and a few dollars per complete user interaction

We share full cost projections during the scoping workshop, not after the contract is signed.

What is the difference between generative AI and traditional AI?

Traditional AI classifies, predicts, or detects based on existing data. Generative AI creates new content such as text, images, code, audio, or video based on what it has learned.

For most businesses, the practical difference is that generative AI can automate tasks that previously required human creation, such as drafting responses, summarising documents, or generating product descriptions.

Do you sign NDAs and handle sensitive data?

Yes. We sign mutual NDAs before the discovery call when requested. We work under GDPR, HIPAA, and SOC 2 controls.

- Private model deployment for clients who cannot send data to public APIs

- Data residency options across EU, US, and UK regions

- Audit logs on every model call for regulated industries

Will my generative AI solution work after launch, or will it drift?

Model drift is real. We design for it from day one.

- Per-call monitoring tracks output quality, latency, and cost in real time

- Regression test suites catch quality drops before users do

- Versioned prompts and tool contracts let us update one part without breaking another

- Scheduled retraining for fine-tuned models when your domain data evolves
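
As a rough illustration of what a regression check can look like, the sketch below compares per-case evaluation scores from a baseline run against a candidate run and flags any case that dropped by more than a tolerance. The function name, case names, scores, and the 0.02 tolerance are hypothetical; how each score is produced (human review, an automated grader, exact match) is left out.

```python
def find_regressions(baseline_scores, candidate_scores, tolerance=0.02):
    """Return names of test cases whose score dropped by more than `tolerance`.

    Scores are assumed to be floats in [0, 1], higher is better. A case missing
    from the candidate run counts as a regression.
    """
    regressions = []
    for case, baseline in sorted(baseline_scores.items()):
        candidate = candidate_scores.get(case)
        if candidate is None or baseline - candidate > tolerance:
            regressions.append(case)
    return regressions


# Hypothetical scores from an evaluation run before and after a prompt change.
baseline = {"refund_policy": 0.92, "contract_summary": 0.88, "escalation": 0.95}
candidate = {"refund_policy": 0.93, "contract_summary": 0.79, "escalation": 0.94}

failed = find_regressions(baseline, candidate)
print(failed)
```

Running a check like this on every prompt, model, or tool-contract change is what lets a quality drop block a release instead of reaching users.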

Can you fix a generative AI project that another vendor started?

Yes. Vendor handovers are roughly a third of our incoming work.

We start with a technical audit of what was built, what was promised, and what is actually working. You get a clear written assessment, then a decision between fixing in place or rebuilding the parts that cannot be salvaged.

Which generative AI models do you use, and how do you choose?

We are model-agnostic. The right model depends on accuracy needs, latency budget, privacy line, and per-call cost.

- For most enterprise text use cases: Claude, GPT-4o, or fine-tuned Llama 3

- For private deployment: Llama 3, Mistral, or Qwen self-hosted

- For image generation: Stable Diffusion or Flux with LoRA fine-tuning

- For voice and audio: Whisper, ElevenLabs, or custom TTS

We benchmark two or three options on your actual data during the discovery phase, then commit to one.

What happens if the proof of concept fails?

You get a written report explaining why, what was tested, and what alternative approaches exist. You do not pay for production work that was never scoped.

In roughly one in five discovery engagements, our recommendation is not to build. We say so plainly. The cost of that honesty is paid back in projects we never have to undo.

Do you offer post-launch support and maintenance?

Yes. Support is offered under a separate SLA-backed agreement after launch.

- Model retraining and prompt tuning on a defined cadence

- Cost optimisation reviews quarterly to keep token spend in check

- Incident response with response time tied to severity

Where is TAK DEVs based, and do you work with international clients?

TAK DEVs delivers globally. We work with clients across the United States, United Kingdom, European Union, Middle East, and Asia-Pacific.

Engagements run remotely with overlapping working hours adjusted to your timezone. For US and UK clients, we keep at least 4 hours of daily overlap with your team.

How is generative AI development different from traditional software development?

Traditional software is deterministic. Generative AI is probabilistic. That changes how you test, deploy, and maintain it.

- Testing requires evaluation harnesses and human review, not just unit tests

- Deployment requires version control over prompts, models, and tool contracts together

- Maintenance requires watching for drift, cost spikes, and edge cases that only show up at scale

We bring traditional software engineering discipline to a non-deterministic medium. That is the gap most agencies miss.

Can you integrate generative AI with our existing systems?

Yes. Integration is most of the work in any real Gen AI project.

- CRM systems: Salesforce, HubSpot, Zoho, Pipedrive

- ERP systems: SAP, NetSuite, Oracle, Microsoft Dynamics

- Communication: Slack, Teams, WhatsApp Business, Twilio

- Data platforms: Snowflake, Databricks, BigQuery

- Custom internal tools: via REST, GraphQL, or direct database connectors